At Origen, we believe building powerful AI solutions shouldn't be limited to companies with massive budgets.

Thanks to the growing open-source AI ecosystem, it’s now easier than ever to create, test, and scale advanced AI tools—faster, cheaper, and without getting tied to a single vendor.

Why Open-Source AI?

Here’s why the open-source AI stack is a game-changer for modern businesses:

✅ Cost-Effective – Avoid per-token API charges; you pay for your own compute instead.

✅ Customizable – Tailor models to your domain, workflows, and values.

✅ Transparent – Inspect the code and model weights instead of relying on a black box.

✅ Scalable – Deploy locally, on your cloud, or in hybrid environments.

✅ No Vendor Lock-In – You're not tied to one provider forever.

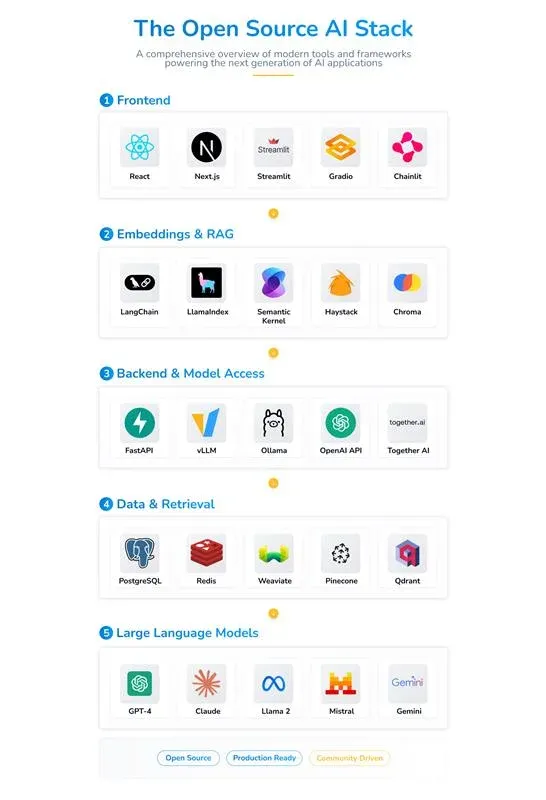

Let’s take a quick tour of the modern open-source AI stack we’re using and recommending for real-world, enterprise-ready solutions:

Frontend: Where User Experience Meets AI

A good AI solution needs a good face. And that starts at the frontend.

- Next.js and Streamlit are two powerhouse frameworks for building clean, fast, and responsive interfaces. Streamlit in particular lets Python developers ship an app without a dedicated frontend engineer.
- You can prototype quickly, visualize data or chatbot outputs, and roll out interactive tools that anyone in the company can use.
- With platforms like Vercel, deploying and hosting Next.js applications becomes a breeze, with little or no DevOps work required.

Use Case Example: A retail client used Next.js + Streamlit to build a customer-facing chatbot interface that could answer product queries using their internal catalog.

Embeddings & RAG Libraries

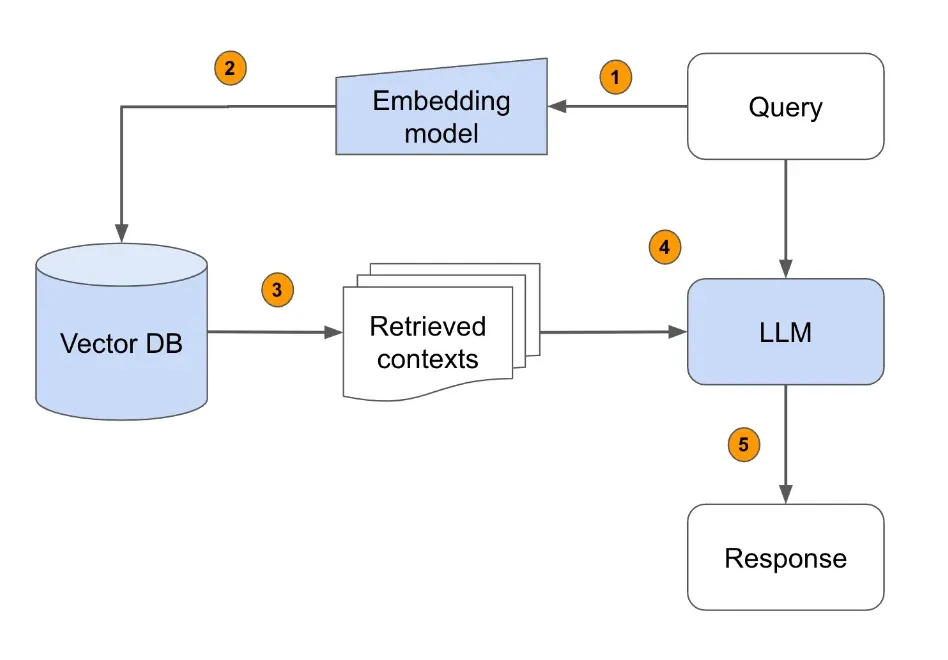

Modern AI isn’t just about what a model was trained on — it’s about what it can access right now.

- RAG (Retrieval-Augmented Generation) retrieves relevant documents from an external knowledge source at query time and adds them to the model's prompt, so answers can draw on current, factual information the model was never trained on.
- Libraries like Nomic, JinaAI, Cognita, and LLMWare help developers implement semantic search and context-aware retrieval pipelines.
- The result is more accurate, up-to-date, and verifiable outputs from your models.

Use Case Example: A legal services firm built an internal assistant using RAG + JinaAI to let staff query their own policy documents with human-like fluency and real citations.
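To make the retrieve-then-augment loop concrete, here is a minimal, dependency-free sketch. It assumes a toy bag-of-words vector in place of a real embedding model (such as those from Nomic or JinaAI), and it stops at building the augmented prompt rather than calling an LLM. The function names, prompt wording, and sample policy texts are hypothetical, not any library's API.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase term counts.
    Assumption: a real pipeline would call an embedding model here instead."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Return the k (doc_id, text) pairs most similar to the query."""
    q = embed(query)
    ranked = sorted(docs.items(), key=lambda item: cosine(q, embed(item[1])), reverse=True)
    return ranked[:k]

def build_prompt(query: str, docs: dict[str, str], k: int = 2) -> str:
    """Augment the question with retrieved passages, tagged by id for citation."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(query, docs, k))
    return (
        "Answer using only the sources below and cite them by id.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )

if __name__ == "__main__":
    # Hypothetical policy snippets standing in for a real document store.
    policies = {
        "HR-12": "Employees may carry over up to five unused vacation days.",
        "IT-03": "Passwords must be rotated every 90 days.",
        "FIN-07": "Expense reports are due within 30 days of purchase.",
    }
    print(build_prompt("How many vacation days carry over?", policies, k=1))
```

The prompt that reaches the model contains only the best-matching passage plus its id, which is what lets the model answer from your documents and cite them.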

Backend & Model Access

Building AI systems is often about stitching many tools together—data inputs, model inference, user interface, analytics, and so on. That’s where orchestration frameworks shine.

- FastAPI (for serving models behind web APIs), LangChain (for chaining LLM calls, tools, and retrievers), and Netflix's Metaflow (for data and ML workflows) are popular choices that let teams build complex pipelines with modularity and speed.
- Want to swap out a model? Route different tasks to different models? Stream outputs to a dashboard? These tools help you do all of that with less code.
- Ollama makes it easy to run open models locally, while Hugging Face hosts a large catalog of models you can download or serve in the cloud.

Use Case Example: A fintech client used LangChain + FastAPI to create a secure data pipeline for a fraud detection system powered by LLaMA.
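The "route different tasks to different models" idea can be sketched with only the standard library. This assumes a local Ollama server on its default port (11434) and uses its `/api/generate` endpoint with streaming turned off; the routing table and the model tags in it are hypothetical placeholders for whatever models you have pulled. A framework such as LangChain would wrap the same idea in its own abstractions.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

# Hypothetical routing table: task type -> model tag pulled into Ollama.
ROUTES = {
    "summarize": "mistral",
    "code": "qwen2.5-coder",
    "default": "llama3",
}

def pick_model(task: str) -> str:
    """Route a task to its model, falling back to the default entry."""
    return ROUTES.get(task, ROUTES["default"])

def build_request(task: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": pick_model(task), "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(task: str, prompt: str, timeout: float = 60.0) -> str:
    """Send the request; requires a running Ollama server with the model pulled."""
    with urllib.request.urlopen(build_request(task, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]

if __name__ == "__main__":
    # Only builds the request, so this runs without a server.
    req = build_request("summarize", "Summarize our Q3 incident report.")
    print(req.full_url, json.loads(req.data)["model"])
```

With this shape, swapping a model is a one-line change to the routing table, and a web layer such as FastAPI could expose `generate` behind an endpoint.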

Vector Databases & Retrieval

For your AI to retrieve relevant context quickly, you need somewhere fast and efficient to store that knowledge.

Dedicated vector databases like Weaviate and Milvus, the PGVector extension for PostgreSQL, and the FAISS similarity-search library are all built to store and search embeddings, essentially the "memory" of your AI.

- They allow quick similarity search across massive datasets, improving how well models recall relevant information.
- With PGVector, even a regular PostgreSQL database can store embeddings alongside your existing data and combine vector search with keyword search for hybrid retrieval.

Use Case Example: A media company used Milvus to build a recommendation engine based on article embeddings for more personalized content delivery.
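The core operation behind all of these stores is nearest-neighbour search over embedding vectors. The sketch below does it by brute force (exact cosine similarity against every stored vector), which is roughly what FAISS's flat indexes do; Milvus, Weaviate, and PGVector add approximate indexes such as HNSW, persistence, and filtering on top. The `TinyVectorStore` class and the 3-dimensional "article embeddings" are hypothetical, for illustration only.

```python
import heapq
import math

class TinyVectorStore:
    """In-memory store with exact (brute-force) cosine-similarity search."""

    def __init__(self, dim: int):
        self.dim = dim
        self._ids: list[str] = []
        self._vecs: list[list[float]] = []

    def _check_and_normalize(self, vec: list[float]) -> list[float]:
        """Validate dimensionality and scale to unit length."""
        if len(vec) != self.dim:
            raise ValueError(f"expected {self.dim} dims, got {len(vec)}")
        norm = math.sqrt(sum(x * x for x in vec))
        if norm == 0:
            raise ValueError("zero vector has no direction")
        return [x / norm for x in vec]

    def add(self, item_id: str, vec: list[float]) -> None:
        # Storing unit vectors means a dot product equals cosine similarity.
        self._ids.append(item_id)
        self._vecs.append(self._check_and_normalize(vec))

    def search(self, query: list[float], k: int = 3) -> list[tuple[str, float]]:
        """Return the k most similar (id, cosine score) pairs, best first."""
        q = self._check_and_normalize(query)
        scores = (
            (sum(a * b for a, b in zip(q, v)), item_id)
            for item_id, v in zip(self._ids, self._vecs)
        )
        return [(item_id, score) for score, item_id in heapq.nlargest(k, scores)]

if __name__ == "__main__":
    store = TinyVectorStore(dim=3)
    store.add("sports-1", [0.9, 0.1, 0.0])
    store.add("politics-1", [0.0, 0.2, 0.9])
    store.add("sports-2", [0.8, 0.3, 0.1])
    print(store.search([1.0, 0.2, 0.0], k=2))
```

Brute force costs one dot product per stored vector on every query, which is fine for small collections; approximate indexes trade a little recall for much lower latency as collections grow.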

Fine-Tuned & Ready for Business

Not all LLMs are created equal. And you don’t have to rely on OpenAI or Anthropic to get state-of-the-art performance.

- Open-source models like LLaMA, Mistral, Gemma, Qwen, and Phi are rapidly becoming viable alternatives, especially for domain-specific tasks.
- You can run them privately, fine-tune them on internal data, and avoid handing sensitive information to a black-box system.
- Parameter-efficient fine-tuning methods like LoRA and QLoRA make it possible to adapt these models on modest hardware, and quantization (which QLoRA builds on) lets them run with far less memory.

Use Case Example: A healthcare provider deployed a fine-tuned Phi-2 model for triaging patient queries, hosted securely on their internal servers.
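Why LoRA and QLoRA fit on modest hardware is easy to check with arithmetic. Instead of updating a full d x k weight matrix W, LoRA freezes W and trains two small matrices, B (d x r) and A (r x k), whose product is added to W; QLoRA additionally stores the frozen base weights in 4-bit precision. The sizes below (a 4096 x 4096 projection, as in LLaMA-7B-class models, rank 8, and 7 billion parameters) are assumptions chosen for illustration, and the memory figure covers weights only.

```python
def lora_trainable_params(d: int, k: int, r: int) -> int:
    """Trainable parameters for one LoRA adapter on a d x k matrix: B (d x r) + A (r x k)."""
    return r * (d + k)

def full_params(d: int, k: int) -> int:
    """Parameters updated by full fine-tuning of the same matrix."""
    return d * k

def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone (ignores activations, optimizer state, overhead)."""
    return n_params * bits_per_param / 8 / 1e9

if __name__ == "__main__":
    d = k = 4096  # assumed projection size
    r = 8         # assumed LoRA rank
    full = full_params(d, k)
    lora = lora_trainable_params(d, k, r)
    print(f"full: {full:,}  LoRA: {lora:,}  ({lora / full:.2%} of full)")
    # prints: full: 16,777,216  LoRA: 65,536  (0.39% of full)

    # Frozen base weights of an assumed 7B-parameter model, 16-bit vs 4-bit.
    print(f"16-bit: {weight_memory_gb(7e9, 16):.1f} GB, 4-bit: {weight_memory_gb(7e9, 4):.1f} GB")
    # prints: 16-bit: 14.0 GB, 4-bit: 3.5 GB
```

Training well under 1% of each adapted matrix, on top of base weights shrunk roughly fourfold, is what brings fine-tuning within reach of a single workstation GPU.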